Computing Musical Mood at Gracenote (2016)

A wrapped archive of my 2016 write-up on Mood 2.0, Gracenote's deep learning mood classification system.

Current Context

I wrote the original post in August 2016 while working in Gracenote's Applied Research group. It captured a practical shift from older statistical models to deep learning for large-scale music metadata.

I am preserving the original below because it reflects an important stage in my work on machine listening.

Source archive: Wayback copy of original post

Original Post (August 9, 2016)

Research has shown that listening to music affects the way we perceive the world. We can encapsulate this phenomenon as musical mood, which is an alignment of the stylistic elements of music with human emotion. This inherent connection between music and mood provides a natural framework for organizing and discovering music. While musical genre is a useful tool for the same task, it is a patchwork of descriptors that can make exploring music difficult for the uninitiated. Someone searching for a song may not connect with a genre labeled "Alternative Pop Rock" without prior exposure. A mood labeled "Soft Tender / Sincere", however, is easier to understand intuitively.

As a company responsible for processing, storing, and distributing much of the world's music metadata, Gracenote has both a difficult task and a unique opportunity in determining the mood of hundreds of millions of songs. With the ever-larger music catalogues of our customers such as Apple and Spotify, the only feasible way to take this bull by the horns is with computation. But how can a computer begin to comprehend the complex harmonies, melodies, and rhythms that construct a musical mood?

Enter AI and Deep Learning

As a forward-thinking data company, one of the ways Gracenote has invested in innovation is through its Applied Research group, which develops new technologies that fuel future products. This article describes one recent return on this investment: a mood classification system called Mood 2.0 powered by Artificial Intelligence and Machine Learning.

Mood 2.0 is an update to Mood 1.2, our existing mood classification system that currently enables mood-based music applications around the world. The new iteration features a significantly more refined mood space (436 mood labels for Mood 2.0 vs 101 mood labels for Mood 1.2) and delivers a 33% increase in performance over Mood 1.2. Where Mood 1.2 uses Gaussian Mixture Models, Mood 2.0 uses Deep Learning via multi-layer neural networks.

To train a model to compute the mood of a song, we first calculate several hundred mathematical values (features) from the audio signal. Each feature captures some aspect of mood, such as rhythm or harmony. These features are then passed through a neural network, which outputs a probability distribution over the 436 moods. This output is compared against known ground truth labels, and the network parameters are updated iteratively to reduce the error. After many training iterations, the network can estimate the mood of songs it has never seen.
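The training loop described above can be sketched in a few lines. This is only an illustration under stated assumptions, not the Mood 2.0 implementation: a single linear layer with a softmax output stands in for the multi-layer network, the feature count, learning rate, and label index are made-up values, and the function names (`softmax`, `forward`, `train_step`) are hypothetical. It uses only the Python standard library rather than Theano/Lasagne.

```python
import math
import random

NUM_MOODS = 436     # size of the Mood 2.0 label space (from the article)
NUM_FEATURES = 8    # assumption: tiny stand-in for "several hundred" features


def softmax(logits):
    """Turn raw scores into a probability distribution."""
    peak = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(value - peak) for value in logits]
    total = sum(exps)
    return [e / total for e in exps]


def forward(weights, biases, features):
    """Single linear layer followed by softmax (simplified network)."""
    logits = [
        sum(w * x for w, x in zip(row, features)) + b
        for row, b in zip(weights, biases)
    ]
    return softmax(logits)


def train_step(weights, biases, features, label, learning_rate=0.1):
    """One gradient step on cross-entropy loss; returns the loss before the update."""
    probs = forward(weights, biases, features)
    loss = -math.log(probs[label])
    for k, row in enumerate(weights):
        # Gradient of cross-entropy w.r.t. logit k is (p_k - target_k).
        grad = probs[k] - (1.0 if k == label else 0.0)
        biases[k] -= learning_rate * grad
        for j, x in enumerate(features):
            row[j] -= learning_rate * grad * x
    return loss


rng = random.Random(0)
weights = [
    [rng.uniform(-0.01, 0.01) for _ in range(NUM_FEATURES)]
    for _ in range(NUM_MOODS)
]
biases = [0.0] * NUM_MOODS

# One synthetic training example: random features with an arbitrary label.
features = [rng.random() for _ in range(NUM_FEATURES)]
label = 42  # hypothetical ground-truth mood index

losses = [train_step(weights, biases, features, label) for _ in range(20)]
```

Repeating `train_step` on the same example drives the loss down, which is the "updated iteratively" part of the description. A real system would iterate over many labeled songs in mini-batches.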

Some Tech

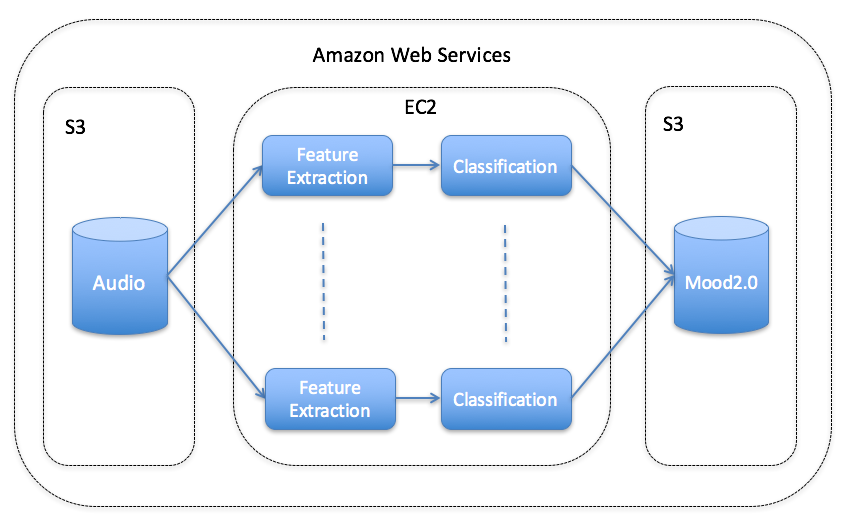

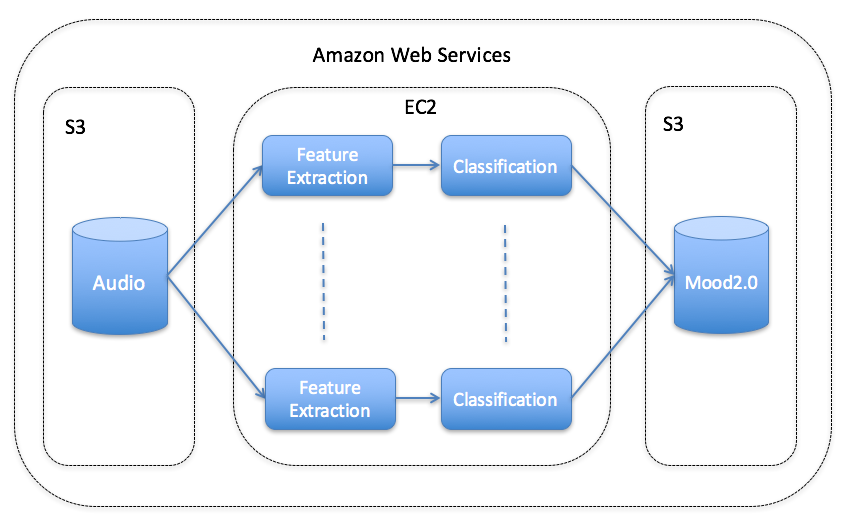

Training required substantial data and compute. We trained on multiple GPUs (Nvidia GeForce GTX TitanX, 6GB RAM) to speed up iteration. Training code was written in Python with SciPy/NumPy and Theano/Lasagne. In production, the trained classifier ran on AWS for scalable parallel processing of large music catalogs.

Figure 1. Mood 2.0 classification system parallel architecture.
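Because each track can be classified independently, catalog processing parallelizes naturally. The sketch below shows that idea on a single machine with a worker pool. It is an assumption-laden illustration rather than the production AWS architecture: `classify_track` is a hypothetical placeholder for feature extraction plus the trained classifier, and the track IDs are invented.

```python
from concurrent.futures import ThreadPoolExecutor


def classify_track(track_id):
    """Hypothetical stand-in for feature extraction plus the trained classifier."""
    return track_id, "placeholder mood"


def classify_catalog(track_ids, max_workers=4):
    """Classify tracks concurrently; each track is independent of the others."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        return dict(pool.map(classify_track, track_ids))


results = classify_catalog(["track-001", "track-002", "track-003"])
```

At catalog scale the same pattern would be spread across many machines instead of threads, but the unit of work stays the same: one track in, one mood result out.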

Form Factor

A song can express multiple moods, and Mood 2.0 captures this with a mood profile. The profile is a vector computed by post-processing the neural network's probability distribution, and it represents how strongly each mood is present in the song.

Table 1. Example Mood Profile for "Give It Away" (Red Hot Chili Peppers)

| Mood Label | Score |
|---|---|
| Loud n' Scrappy | 38% |
| Wild Loud Dark Groove | 24% |
| Urgent / Frustrated Pop | 15% |
| Tightly Wound Excitement / Positive Frustration | 9% |
| Anger / Hatred | 6% |
| Teenage Loud Fast Positive Anthemic / Melodic | 3% |
| Alienated Anxious Groove | 2% |
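The article does not specify the post-processing that turns the network output into a mood profile. One plausible minimal version, shown here purely as an assumption, drops moods below a score threshold and sorts the rest by strength. The function name `mood_profile`, the `min_score` parameter and its default, and the toy input values are all hypothetical.

```python
def mood_profile(probabilities, labels, min_score=0.02):
    """Keep moods scoring at least min_score, strongest first.

    Assumed post-processing for illustration; not the documented Mood 2.0 method.
    """
    kept = [
        (label, score)
        for label, score in zip(labels, probabilities)
        if score >= min_score
    ]
    kept.sort(key=lambda pair: pair[1], reverse=True)
    return kept


# Toy input: three moods instead of the full 436-entry distribution.
labels = ["Anger / Hatred", "Tender Sad", "Loud n' Scrappy"]
probabilities = [0.06, 0.01, 0.38]

profile = mood_profile(probabilities, labels)
```

With this toy input, `Tender Sad` falls below the threshold and is dropped, while the remaining moods are ordered from strongest to weakest, similar in shape to Table 1.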

Table 2. Top Computed Moods for Selected Tracks

| Track | Artist | Top Moods |
|---|---|---|
| Locked Out Of Heaven | Bruno Mars | Carefree Soaring Bliss Party People Groove (0.439); Edgy Dark Fiery Intense Pop Beat (0.329); Latin Boom Boom Sexy Party Trance Beat (0.165) |
| Giant Steps | John Coltrane | Dark Energetic Abstract Groove (0.425); Lively "Cool" Subdued / Indirect Positive (0.303); Happy Energetic Abstract Groove (0.149) |
| (You Make Me Feel Like) A Natural Woman | Aretha Franklin | Slow Strong Serious Soulful Ballad (0.402); Sad Soulful Jaunty Ballad (0.234); Bare Emotion (0.177) |
| Tears In Heaven | Eric Clapton | Tender Lite Melancholy (0.406); Sober / Resigned / Weary (0.221); Soft Tender / Sincere (0.16) |
| Basket Case | Green Day | Loud Fast Dark Anthemic (0.277); Gothic Haunted Overdrive Beast (0.167); Aggressive Crunching Power (0.167) |
| Stupify | Disturbed | Aggressive Evil (0.319); Gothic Haunted Overdrive Beast (0.206); Anger / Hatred (0.179) |
| Believe | Cher | Power Boogie Dreamy Trippy Beat (0.286); Passionate Dark Dramatic Fiery Groove (0.221); Dark Gritty Sexy Groove (0.182) |
| Slide | Goo Goo Dolls | Positive Flowing Strumming Serious Strength (0.358); Dark Loud Strumming Ramshackle Ballad (0.196); Loud Overwrought Heartfelt Earnest Bittersweet Ballad (0.184) |
| All Summer Long | Kid Rock | Ramshackle Jaunty Rock (0.387); Whatever Kick-Back Loud Party Times (0.255); Sassy (0.226) |
| Can It Be All So Simple / Intermission | Wu-Tang Clan | Dark Cool Calm Serious Truthful Beats (0.479); Kick-Back Dreamy Words & Beats (0.202); Flat / Speech Only (0.119) |
| You're Still A Young Man | Tower Of Power | Soulful Solid Strength & Glory (0.367); Slow Strong Serious Soulful Ballad (0.300); Poseur Earnest Uplifting Ballad (0.143) |
| Georgia On My Mind | Ray Charles | Sweet & Tender Warm Mellow Reverent Peace (0.633); Tender Sad (0.197); Dreamy Romantic Lush (0.089) |

Bird's Eye

In our first efforts to transition Mood 2.0 into a product, we computed Mood 2.0 profiles for an initial one million tracks. Some of the most common computed moods were:

- Dramatic - Strong Emotional Vocal

- Dramatic - Strong Positive Emotional Vocal

- Bitter

- Power Dreamy Beat

- Serious Measured Powerful Emotive Tenderness

- Dismay / Awfulness / Bad Scene

- Flat Dance Groove - Mechanical

- Lyrical Romantic Bittersweet

- Romantic Dark Energetic Complex

- Tender Lite Melancholy

Where Next?

Future work included modeling mood as a timeline through each song, enabling one-to-one experiences such as synchronized mood lighting. We also explored interactions between mood and other metadata attributes (genre, language, origin, era) to improve discovery and understanding at global scale.

by Cameron Summers | August 9, 2016